can S3client from region upload to bucket of another region?

Triggering a Lambda by uploading a file to S3 is one of the introductory examples of the service. As a tutorial, it can be implemented in under 15 minutes with canned code, and is something that a lot of people find useful in real life. But the tutorials that I've seen only look at the "happy path": they don't explore what happens (and how to recover) when things go wrong. Nor do they look at how the files get into S3 in the first place, which is a key part of any application design.

This post is a "deep dive" on the standard tutorial, looking at architectural decisions and operational concerns in addition to the simple mechanics of triggering a Lambda from an S3 upload.

Architecture

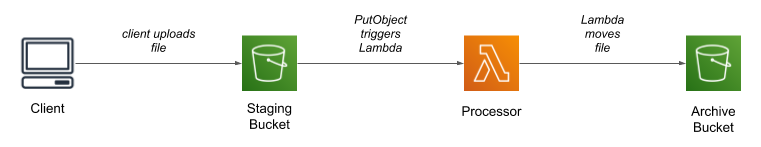

As the title says, the architecture uses two buckets and a Lambda function. The client uploads a file to the first ("staging") bucket, which triggers the Lambda; after processing the file, the Lambda moves it into the second ("archive") bucket.

Why two buckets?

From a strictly technical perspective, there's no need to have two buckets. You can configure an S3 trigger to fire when a file is uploaded with a specific prefix, and could move the file to a different prefix after processing, so could keep everything within a single bucket. You might also question the point of the archive bucket entirely: once the file has been processed, why keep it? I think there are several answers to this question, each from a different perspective.

First, as always, security: two buckets minimize blast radius. Clients require privileges to upload files; if you accidentally grant too broad a scope, the files in the staging bucket might be compromised. However, since files are removed from the staging bucket after they're processed, at any point in time that bucket should have few or no files in it. This assumes, of course, that those too-broad privileges don't also allow access to the archive bucket. One way to protect against that is to adopt the habit of narrowly-scoped policies that grant permissions on a single bucket.

Configuration management also becomes easier: with a shared bucket, everything that touches that bucket (from IAM policies, to S3 lifecycle policies, to application code) has to be configured with both a bucket name and a prefix. By going to two buckets, you can eliminate the prefix (although the application might still use prefixes to separate files, for example by client ID).

Failure recovery and bulk uploads are also easier when you separate new files from those that have been processed. In many cases it's a simple matter of moving the files back into the upload bucket to trigger reprocessing.
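As a rough sketch of that recovery step: the function below copies a list of archived objects back into the staging bucket. The function name, its arguments, and the choice to copy rather than move are assumptions made for illustration, not part of the example project. The client is passed in (in real use it would be `boto3.client('s3')`), and the sketch assumes the bucket trigger fires on all object-created events, including copies.

```python
def requeue_for_processing(s3_client, archive_bucket, staging_bucket, keys):
    """Copy archived objects back to the staging bucket to re-trigger the Lambda.

    s3_client is expected to be a boto3 S3 client; the bucket names and the
    list of keys are hypothetical inputs supplied by whoever runs the recovery.
    """
    requeued = []
    for key in keys:
        # a server-side copy creates a new object in the staging bucket,
        # which should raise the same kind of notification as an upload
        s3_client.copy_object(
            CopySource={'Bucket': archive_bucket, 'Key': key},
            Bucket=staging_bucket,
            Key=key)
        requeued.append(key)
    return requeued
```

Note that `copy_object` only handles objects up to 5 GB; larger files would need the managed `copy()` method instead.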

How do files get uploaded?

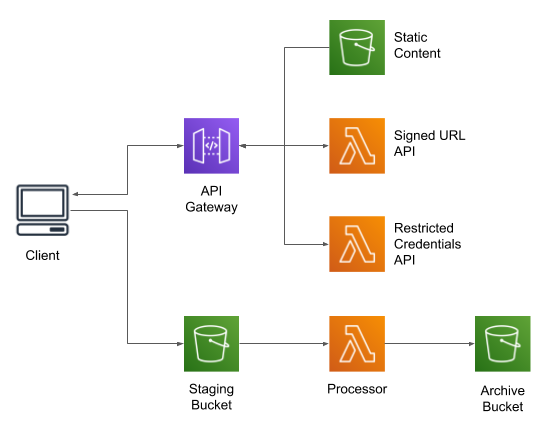

All of the examples that I've seen assume that a file magically arrives in S3; how it gets there is "left as an exercise for the reader." However, this can be quite challenging for real-world applications, particularly those running in a user's browser. For this post I'm going to focus on two approaches: direct PUT using a presigned URL, and multi-part upload using the JavaScript SDK.

Pre-signed URLs

Amazon Web Services are, in fact, web services: every operation is implemented as an HTTPS request. For many services you don't think about that, and instead interact with the service via an Amazon-provided software development kit (SDK). For S3, however, the web-service nature is closer to the surface: you can skip the SDK and download files with GET, just as when interacting with a website, or upload files with a PUT or POST.

The one caveat to interacting with S3, assuming that you haven't simply exposed your bucket to the world, is that these GETs and PUTs must be signed, using the credentials belonging to a user or role. The actual signing process is rather complex, and requires access credentials (which you don't want to provide to an arbitrary client, lest they be copied and used for nefarious purposes). As an alternative, S3 allows you to generate a pre-signed URL, using the credentials of the application generating the URL.

Using the S3 SDK, generating a presigned URL is easy: here's some Python code (which might be run in a web-service Lambda) that will create a pre-signed URL for a PUT request. Note that you have to provide the expected content type: a URL signed for text/plain can't be used to upload a file with type image/jpeg.

```python
import boto3

s3_client = boto3.client('s3')

# bucket, key, and content_type are supplied by the caller
params = {
    'Bucket':      bucket,
    'Key':         key,
    'ContentType': content_type
}
url = s3_client.generate_presigned_url('put_object', params)
```

If you run this code you'll get a long URL that contains all of the information needed to upload the file:

https://example.s3.amazonaws.com/example?AWSAccessKeyId=AKIA3XXXXXXXXXXXXXXX&Signature=M9SbH6zl9LpmM6%2F2POBk202dWjI%3D&content-type=text%2Fplain&Expires=1585003481

Important caveat: just because you can generate a presigned URL doesn't mean the URL will be valid. For this example, I used bogus access credentials and referred to a bucket and key that (probably) don't exist (certainly not ones that I control). If you paste it into a browser, you'll get an "Access Denied" response (albeit due to expiration, not invalid credentials).
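Since expiration is part of what makes a presigned URL valid, it can be worth setting it explicitly rather than relying on the SDK default of one hour. The following is a minimal sketch, assuming a hypothetical helper named `create_upload_url` and a five-minute lifetime chosen purely for illustration; `s3_client` is a boto3 S3 client passed in by the caller.

```python
def create_upload_url(s3_client, bucket, key, content_type, expires_in=300):
    """Return a presigned PUT URL that stops working after expires_in seconds."""
    return s3_client.generate_presigned_url(
        'put_object',
        Params={
            'Bucket': bucket,
            'Key': key,
            'ContentType': content_type,   # the upload must send this same type
        },
        ExpiresIn=expires_in)
```

A short lifetime limits how long a leaked URL remains useful, at the cost of failing clients that wait too long between requesting the URL and starting the upload.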

To upload a file, your client first requests the presigned URL from the server, then uses that URL to upload the file (in this example, running in a browser, selectedFile was populated from a file input field and content is the result of using a FileReader to load that file).

```javascript
async function uploadFile(selectedFile, content, url) {
    console.log("uploading " + selectedFile.name);
    const request = {
        method: 'PUT',
        mode: 'cors',
        cache: 'no-cache',
        headers: {
            'Content-Type': selectedFile.type
        },
        body: content
    };
    let response = await fetch(url, request);
    console.log("upload status: " + response.status);
}
```

Multi-part uploads

While presigned URLs are convenient, they have some limitations. The first is that objects uploaded by a single PUT are limited to 5 GB in size. While this may be larger than anything you expect to upload, there are some use cases that will exceed that limit. And even if you are under that limit, large files can still be a problem to upload: with a fast, 100 Mbit/sec network dedicated to one user, it will take almost two minutes to upload a 1 GB file, two minutes in which your user's browser sits, apparently unresponsive. And if there's a network hiccup in the middle, you have to start the upload over again.

A better alternative, even if your files aren't that large, is to use multi-part uploads with a client SDK. Under the covers, a multi-part upload starts by retrieving a token from S3, then uses that token to upload chunks of the file (typically around 10 MB each), and finally marks the upload as complete. I say "under the covers" because all of the SDKs have a high-level interface that handles the details for you, including resending any failed chunks.
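To make those three steps concrete, here is a sketch of the low-level calls, written in Python rather than the browser JavaScript used elsewhere in this post. The function name, the 10 MB part size, and the abort-on-error handling are assumptions for illustration; in practice you would normally let the SDK's high-level interface do this. `s3_client` is a boto3 S3 client and `fileobj` is any binary file-like object.

```python
PART_SIZE = 10 * 1024 * 1024   # assumed chunk size; S3 requires at least 5 MiB for every part except the last

def multipart_upload(s3_client, bucket, key, fileobj, part_size=PART_SIZE):
    """Upload fileobj in parts using the low-level multi-part calls; returns the part count.

    An empty file produces no parts and would need a plain put_object instead.
    """
    # step 1: start the upload; S3 returns the identifier (the "token") used by later calls
    upload_id = s3_client.create_multipart_upload(Bucket=bucket, Key=key)['UploadId']
    parts = []
    try:
        part_number = 1
        while True:
            chunk = fileobj.read(part_size)
            if not chunk:
                break
            # step 2: upload each chunk, remembering the ETag that S3 returns for it
            response = s3_client.upload_part(
                Bucket=bucket, Key=key, UploadId=upload_id,
                PartNumber=part_number, Body=chunk)
            parts.append({'PartNumber': part_number, 'ETag': response['ETag']})
            part_number += 1
        # step 3: tell S3 to assemble the parts into the final object
        s3_client.complete_multipart_upload(
            Bucket=bucket, Key=key, UploadId=upload_id,
            MultipartUpload={'Parts': parts})
    except Exception:
        # abort so that S3 doesn't keep the orphaned parts, then re-raise
        s3_client.abort_multipart_upload(Bucket=bucket, Key=key, UploadId=upload_id)
        raise
    return len(parts)
```

The list of part numbers and ETags passed to the final call is why a browser-based upload needs the ETag header exposed by the bucket's CORS configuration.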

However, this has to be done with a client-side SDK: you can't pre-sign a multi-part upload. Which means you must provide credentials to that client. Which in turn means that you want to limit the scope of those credentials. And while you can use Amazon Cognito to provide limited-time credentials, you can't use it to provide limited-scope credentials: all Cognito authenticated users share the same role.

To provide limited-scope credentials, you need to assume a role that has general privileges to access the bucket while applying a "session" policy that restricts that access. This can be implemented using a Lambda as an API endpoint:

```python
# this fragment runs inside the Lambda handler, and needs boto3, json, and os
# to be imported at module level; bucket and session_policy are defined elsewhere
sts_client = boto3.client('sts')

role_arn = os.environ['ASSUMED_ROLE_ARN']
session_name = f"{context.function_name}-{context.aws_request_id}"

response = sts_client.assume_role(
    RoleArn=role_arn,
    RoleSessionName=session_name,
    Policy=json.dumps(session_policy)
)
creds = response['Credentials']

return {
    'statusCode': 200,
    'headers': {
        'Content-Type': 'application/json'
    },
    'body': json.dumps({
        'access_key':     creds['AccessKeyId'],
        'secret_key':     creds['SecretAccessKey'],
        'session_token':  creds['SessionToken'],
        'region':         os.environ['AWS_REGION'],
        'bucket':         bucket
    })
}
```

Even if you're not familiar with the Python SDK, this should be fairly easy to follow: STS (the Security Token Service) provides an assume_role method that returns credentials. The specific role isn't particularly important, as long as it allows s3:PutObject on the staging bucket. However, to restrict that role to allow uploading a single file, you must use a session policy:

```python
session_policy = {
    'Version': '2012-10-17',
    'Statement': [
        {
            'Effect': 'Allow',
            'Action': 's3:PutObject',
            'Resource': f"arn:aws:s3:::{bucket}/{key}"
        }
    ]
}
```

On the client side, you would use these credentials to construct a ManagedUpload object, and then use it to perform the upload. As with the prior example, selectedFile is set using an input field. Unlike the prior example, there's no need to explicitly read the file's contents into a buffer; the SDK does that for you.

```javascript
async function uploadFile(selectedFile, accessKeyId, secretAccessKey, sessionToken, region, bucket) {
    AWS.config.region = region;
    AWS.config.credentials = new AWS.Credentials(accessKeyId, secretAccessKey, sessionToken);

    console.log("uploading " + selectedFile.name);
    const params = {
        Bucket: bucket,
        Key: selectedFile.name,
        ContentType: selectedFile.type,
        Body: selectedFile
    };
    let upload = new AWS.S3.ManagedUpload({ params: params });
    upload.on('httpUploadProgress', function(evt) {
        console.log("uploaded " + evt.loaded + " of " + evt.total + " bytes for " + selectedFile.name);
    });
    return upload.promise();
}
```

If you use multi-part uploads, create a bucket lifecycle rule that deletes incomplete uploads. If you don't do this, you might find an ever-increasing S3 storage bill for your staging bucket that makes no sense given the small number of objects in the bucket list. The cause is interrupted multi-part uploads: the user closed their browser window, or lost network connectivity, or did something else to prevent the SDK from marking the upload complete. Unless you have a lifecycle rule, S3 will keep (and bill for) the parts of those uploads, in the hope that someday a client will come back and either complete them or explicitly abort them.
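One way to create such a rule is with the Python SDK. This is a sketch only: the rule ID, the seven-day window, and the helper names are assumptions chosen for illustration, and you might prefer to define the rule in CloudFormation alongside the bucket. `s3_client` is a boto3 S3 client.

```python
def abort_incomplete_uploads_config(days=7):
    """Build a lifecycle configuration that removes the parts of stalled multi-part uploads."""
    return {
        'Rules': [{
            'ID': 'abort-incomplete-multipart-uploads',   # hypothetical rule name
            'Status': 'Enabled',
            'Filter': {'Prefix': ''},                      # empty prefix: applies to the whole bucket
            'AbortIncompleteMultipartUpload': {'DaysAfterInitiation': days},
        }]
    }

def apply_abort_rule(s3_client, bucket, days=7):
    # note: this call replaces any lifecycle configuration already on the bucket
    s3_client.put_bucket_lifecycle_configuration(
        Bucket=bucket,
        LifecycleConfiguration=abort_incomplete_uploads_config(days))
```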

You'll also need a CORS configuration on your bucket that (1) allows both PUT and POST requests, and (2) exposes the ETag header.
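A CORS configuration meeting those two requirements might look like the following sketch. The helper names and the decision to allow all request headers are assumptions; the list of allowed origins is something you would supply for your own site. `s3_client` is a boto3 S3 client.

```python
def upload_cors_config(allowed_origins):
    """Build a CORS configuration that permits browser uploads from the given origins."""
    return {
        'CORSRules': [{
            'AllowedOrigins': list(allowed_origins),
            'AllowedMethods': ['PUT', 'POST'],   # single PUT and multi-part requests
            'AllowedHeaders': ['*'],             # assumption: accept any request header
            'ExposeHeaders': ['ETag'],           # the SDK reads this after each part
        }]
    }

def apply_upload_cors(s3_client, bucket, allowed_origins):
    s3_client.put_bucket_cors(
        Bucket=bucket,
        CORSConfiguration=upload_cors_config(allowed_origins))
```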


A prototypical transformation Lambda

In this section I'm going to call out what I consider "best practices" when writing a Lambda. My implementation language of choice is Python, but the same ideas apply to any other language.

The Lambda handler

I like Lambda handlers that don't do a lot of work inside the handler function, so my prototypical Lambda looks like this:

```python
import boto3
import json
import logging
import os
import urllib.parse

archive_bucket = os.environ['ARCHIVE_BUCKET']

logger = logging.getLogger(__name__)
logger.setLevel(logging.DEBUG)

s3_client = boto3.client('s3')

def lambda_handler(event, context):
    logger.debug(json.dumps(event))
    for record in event.get('Records', []):
        eventName = record['eventName']
        bucket = record['s3']['bucket']['name']
        raw_key = record['s3']['object']['key']
        key = urllib.parse.unquote_plus(raw_key)
        try:
            logger.info(f"processing s3://{bucket}/{key}")
            process(bucket, key)
            logger.info(f"moving s3://{bucket}/{key} to s3://{archive_bucket}/{key}")
            archive(bucket, key)
        except Exception as ex:
            logger.exception(f"unhandled exception processing s3://{bucket}/{key}")

def process(bucket, key):
    # do something here
    pass

def archive(bucket, key):
    # S3 has no "move": copy to the archive bucket, then delete the original
    s3_client.copy(
        CopySource={'Bucket': bucket, 'Key': key },
        Bucket=archive_bucket,
        Key=key)
    s3_client.delete_object(Bucket=bucket, Key=key)
```

Breaking it down:

- I get the name of the archive bucket from an environment variable (the name of the upload bucket is part of the invocation event).

- I'm using the Python logging module for all output. Although I don't do it here, this lets me write JSON log messages, which are easier to use with CloudWatch Logs Insights or import into Elasticsearch.

- I create the S3 client outside the Lambda handler. In general, you want to create long-lived clients outside the handler so that they can be reused across invocations. However, at the same time you don't want to establish network connections when loading a Python module, because that makes it difficult to unit test. In the case of the Boto3 library, however, I know that it creates connections lazily, so there's no harm in creating the client as part of module initialization.

- The handler function loops over the records in the event. One of the common mistakes that I see with event-handler Lambdas is that they assume there will only be a single record in the event. And that may be right 99% of the time, but you still need to write a loop.

- The S3 keys reported in the event are URL-encoded; you need to decode the key before passing it to the SDK. I use urllib.parse.unquote_plus(), which in addition to handling "percent-encoded" characters, will translate a + into a space.

- For each input file, I call the process() function followed by the archive() function. This pair of calls is wrapped in a try-catch block, meaning that an individual failure won't affect the other files in the event. It also means that the Lambda runtime won't retry the event (which would almost certainly have the same failure, and which would mean that "early" files would be processed multiple times).

- In this example process() doesn't do anything; in the real world this is where you'd put most of your code.

- The archive() function moves the file as a combined copy and delete; there is no built-in "move" operation (again, S3 is a web-service, so is limited to the six HTTP "verbs").
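The key-decoding step is easy to get wrong, so here is a small standalone illustration. The helper name `decode_key` and the sample keys are made up for this example; the only library call is the standard `urllib.parse.unquote_plus()`.

```python
import urllib.parse

def decode_key(raw_key):
    """Decode an object key as it appears in an S3 event notification."""
    return urllib.parse.unquote_plus(raw_key)

# spaces arrive as "+", and parentheses as percent-encoded characters
print(decode_key("reports/2020+Q1+summary%281%29.csv"))   # reports/2020 Q1 summary(1).csv

# a literal plus sign in the key arrives percent-encoded, so it survives decoding
print(decode_key("totals/a%2Bb.txt"))                      # totals/a+b.txt
```

Passing the raw key straight to the SDK would typically fail with a "not found" error for any file whose name contains a space or punctuation.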

Don't store files on attached disk

Lambda provides 512 MB of temporary disk space. It is tempting to use that space to buffer your files during processing, but doing so has two potential problems. First, it may not be enough space for your file. Second, and more important, you have to be careful to keep it clean, deleting your temporary files even if the function throws an exception. If you don't, you may run out of space due to repeated invocations of the same Lambda environment.

There are alternatives. The first, and easiest, is to download the entire file into RAM and work with it there. Python makes this particularly easy with its BytesIO object, which behaves identically to on-disk files. You may still have an issue with very large files, and will have to configure your Lambda with enough memory to hold the entire file (which may increase your per-invocation cost), but I believe that the simplified coding is worth it.
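A minimal sketch of the in-memory approach, assuming a hypothetical helper named `load_into_memory` that receives a boto3 S3 client:

```python
import io

def load_into_memory(s3_client, bucket, key):
    """Download an entire S3 object into RAM and return it as a file-like object."""
    buffer = io.BytesIO()
    s3_client.download_fileobj(bucket, key, buffer)
    buffer.seek(0)      # rewind so that callers start reading at the first byte
    return buffer
```

The returned buffer can be handed to anything that expects a binary file, and it disappears when the function returns, so there is nothing to clean up.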

You can also work with the response from an S3 GET request as a stream of bytes. The various SDK docs caution against doing this: to quote the Java SDK, "the object contents […] stream directly from Amazon S3." I suspect that this warning is more relevant to multi-threaded environments such as a Java application server, where a long-running request might block access to the SDK connection pool. For a single-threaded Lambda, I don't see it as an issue.

Of more concern, the SDK for your language might not expose this stream in a way that's consistent with standard file-based IO. Boto3, the Python SDK, is one of the offenders: its get_object() function returns a StreamingBody, which has its own methods for retrieving data and does not follow Python's io library conventions.
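For line-oriented text files, one of those StreamingBody-specific methods is `iter_lines()`, which yields each line as bytes. The sketch below wraps it in a generator; the function name and the assumption that the file is UTF-8 text are mine, and `s3_client` is a boto3 S3 client.

```python
def read_text_lines(s3_client, bucket, key, encoding='utf-8'):
    """Yield the lines of an S3 object as strings, without loading the whole file."""
    response = s3_client.get_object(Bucket=bucket, Key=key)
    # iter_lines() belongs to botocore's StreamingBody, not to Python's io module
    for raw_line in response['Body'].iter_lines():
        yield raw_line.decode(encoding)
```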

A final alternative is to use byte-range retrieves from S3, using a relatively small buffer size. I don't think this is a particularly good alternative, as you will have to handle the case where your data records span retrieved byte ranges.
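To show what handling that case involves, here is a sketch that reads newline-delimited records through ranged GETs and carries any partial record over to the next range. The function name, the 1 MB default chunk size, and the newline-delimited format are all assumptions for illustration; `s3_client` is a boto3 S3 client.

```python
def iter_records_by_range(s3_client, bucket, key, chunk_size=1024 * 1024):
    """Yield newline-delimited records (as bytes), fetching chunk_size bytes per request."""
    size = s3_client.head_object(Bucket=bucket, Key=key)['ContentLength']
    leftover = b''
    start = 0
    while start < size:
        end = min(start + chunk_size, size) - 1       # Range end is inclusive
        response = s3_client.get_object(
            Bucket=bucket, Key=key, Range=f"bytes={start}-{end}")
        data = leftover + response['Body'].read()
        records = data.split(b'\n')
        # the last element is either empty (the chunk ended on a newline) or a
        # partial record that continues in the next range; carry it forward
        leftover = records.pop()
        yield from records
        start = end + 1
    if leftover:
        yield leftover      # final record that had no trailing newline
```

Even this simple version needs an extra request to learn the object size and bookkeeping for the leftover bytes, which is the complexity the paragraph above warns about.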

IAM Permissions

The principle of least privilege says that this Lambda should only be allowed to read and delete objects in the upload bucket, and write objects in the archive bucket (in addition to whatever permissions are needed to process the file). I like to manage these permissions as separate inline policies in the Lambda's execution role (shown here as a fragment from the CloudFormation resource definition):

```yaml
Policies:
  - PolicyName: "ReadFromSource"
    PolicyDocument:
      Version: "2012-10-17"
      Statement:
        Effect: "Allow"
        Action:
          - "s3:DeleteObject"
          - "s3:GetObject"
        Resource: [ !Sub "arn:${AWS::Partition}:s3:::${UploadBucketName}/*" ]
  - PolicyName: "WriteToDestination"
    PolicyDocument:
      Version: "2012-10-17"
      Statement:
        Effect: "Allow"
        Action:
          - "s3:PutObject"
        Resource: [ !Sub "arn:${AWS::Partition}:s3:::${ArchiveBucketName}/*" ]
```

I personally prefer inline role policies, rather than managed policies, because I like to tailor my roles' permissions to the applications that use them. However, a real-world Lambda will require additional privileges in order to do its work, and you may find yourself bumping into IAM's 10 KB limit for inline policies. If so, managed policies might be your best solution, but I would still target them at a single application.

Handling duplicate and out-of-order invocations

In any distributed system you have to be prepared for messages to be resent or sent out of order; this one is no different. The standard approach to dealing with this problem is to make your handlers idempotent: writing them in such a way that you can call them multiple times with the same input and get the same result. This can be either very easy or incredibly hard.

On the easy side, if you know that you'll only get one version of a source file and the processing step will always produce the same output, just run it again. You may pay a little more for the excess Lambda invocations, but that's almost certainly less than you'll pay for developer time to ensure that the process only happens once.

Where things get difficult is when you have to deal with concurrently processing different versions of the same file: version 1 of a file is uploaded, and while the Lambda is processing it, version 2 is uploaded. Since Lambdas are spun up as needed, you're likely to have a race condition with two Lambdas processing the same file, and whichever finishes last wins. To deal with this you have to implement some way to keep track of in-process requests, possibly using a transactional database, and delay or abort the second Lambda (and note that a delay will turn into an abort if the Lambda times out).

Another alternative is to abandon the simple "two buckets" pattern, and turn to an approach that uses a queue. The challenge here is providing concurrency: if you use a queue to single-thread file processing, you can easily find yourself backed up. One solution is multiple queues, with uploaded files distributed to queues based on a name hash; a multi-shard Kinesis stream gives you this hashing by design.
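The name-hash distribution can be sketched in a few lines. The function name, the use of SHA-256, and the idea of passing a list of queue URLs are assumptions for illustration; the point is only that every version of the same file lands on the same queue, so a single consumer per queue sees them in order.

```python
import hashlib

def queue_for_key(key, queue_urls):
    """Pick a queue for an object key so that the same key always maps to the same queue."""
    digest = hashlib.sha256(key.encode('utf-8')).digest()
    index = int.from_bytes(digest[:8], 'big') % len(queue_urls)
    return queue_urls[index]
```

A cryptographic digest is used here rather than Python's built-in `hash()`, because the built-in is randomized per process for strings and so would send the same key to different queues from different Lambda environments.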

In a future post I'll dive into these scenarios; for now I just want to make you aware that they exist and should be considered in your architecture.

What if the file's too big to be transformed by a Lambda?

Lambda is a great solution for asynchronously processing uploads, but it's not appropriate for all situations. It falls down with large files, long-running transformations, and many tasks that require native libraries. One place that I personally ran into those limitations was video transformation, with files that could be up to 2 GB and a native library that was not available in the standard Lambda execution environment.

There are, to be sure, ways to work around all of these limitations. But rather than shoehorn your big, long-running task into an environment designed for short tasks, I recommend looking at other AWS services.

The first place that I would turn is AWS Batch: a service that runs Docker images on a cluster of EC2 instances that can be managed by the service to meet your performance and throughput requirements. You can create a Docker image that packages your application with whatever third-party libraries that it needs, and use a bucket-triggered Lambda to invoke that image with arguments that identify the file to be processed.
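As a sketch of what that trigger Lambda might do, the function below submits a Batch job for one uploaded file. The function name, the job-name scheme, and the `bucket` and `key` parameter names are assumptions; the job definition would have to reference matching placeholders in its command. `batch_client` is a boto3 Batch client passed in by the caller.

```python
import re

def submit_processing_job(batch_client, job_queue, job_definition, bucket, key):
    """Submit an AWS Batch job to process one uploaded file; returns the job ID."""
    # Batch job names allow only letters, numbers, hyphens, and underscores,
    # up to 128 characters, so replace everything else in the key
    job_name = re.sub(r'[^A-Za-z0-9_-]', '-', f"process-{key}")[:128]
    response = batch_client.submit_job(
        jobName=job_name,
        jobQueue=job_queue,
        jobDefinition=job_definition,
        # hypothetical parameter names, substituted into the job definition's command
        parameters={'bucket': bucket, 'key': key})
    return response['jobId']
```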

When shifting the file processing out of Lambda, you have to give some thought to how the overall pipeline looks. For example, do you use Lambda just as a trigger, and rely on the batch job to move the file from staging bucket to archive bucket? Or do you have a second Lambda that's triggered by the CloudWatch Event indicating the batch job is done? Or do you use a Step Function? These are topics for a future post.

Wrapping up: a skeleton application

I've made an example project available on GitHub. This example is rather more complex than simply a Lambda to process files:

The core of the project is a CloudFormation script to build out the infrastructure; the project's README file gives instructions on how to run it. This example doesn't use any AWS services that have a per-hour charge, but you will be charged for the content stored in S3, for API Gateway requests, and for Lambda invocations.

Source: https://chariotsolutions.com/blog/post/two-buckets-and-a-lambda-a-pattern-for-file-processing/
